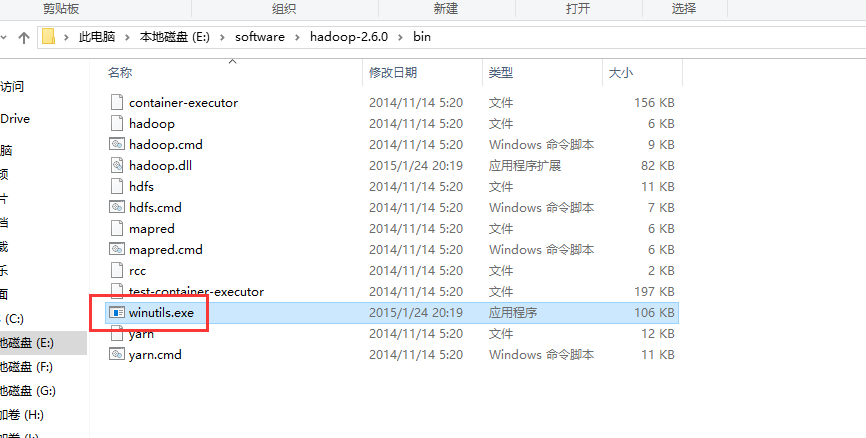

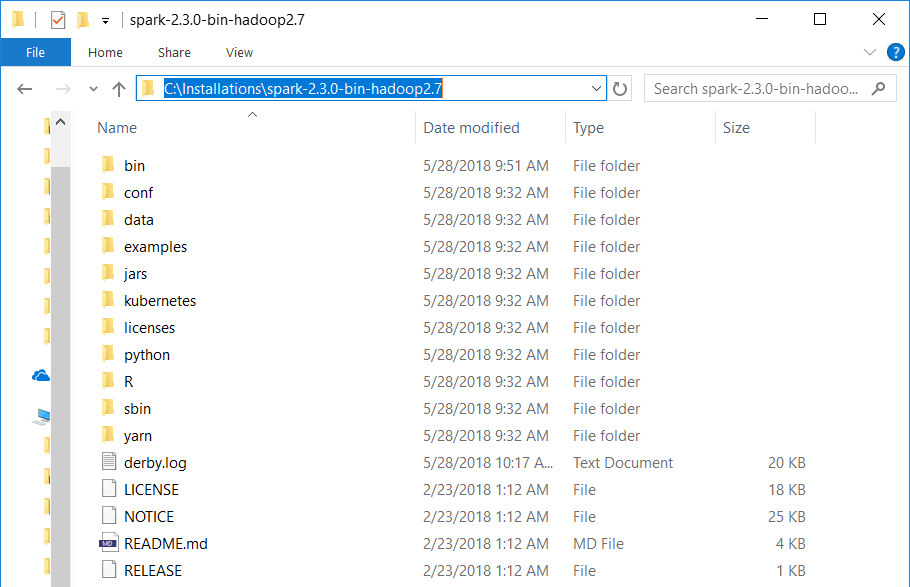

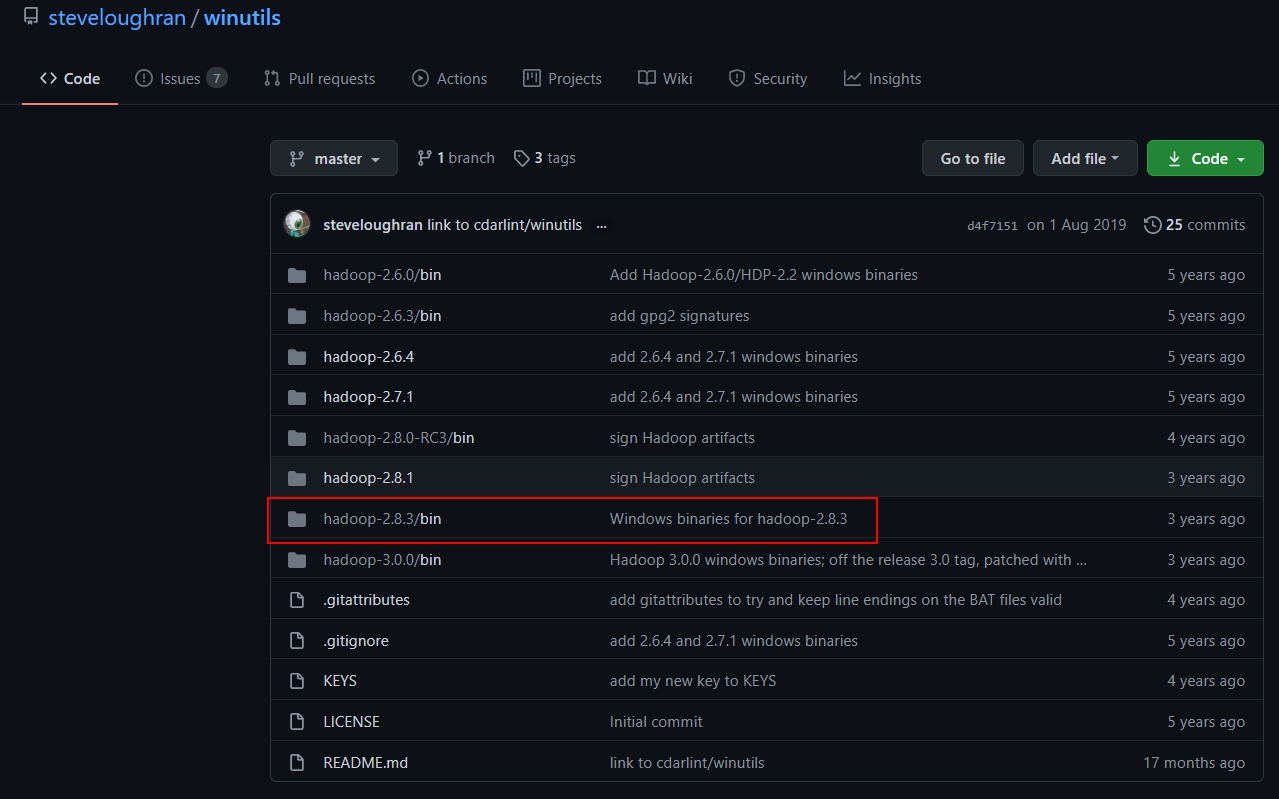

I've been working seriously with Apache Spark for about a year now, and it's a wonderful piece of software. Nevertheless, while the Java motto is "Write once, run anywhere", it doesn't really apply to Apache Spark, which depends on adding an executable, winutils.exe, to run on Windows. That feels a bit odd, but it's fine… until you need to run it on a system where adding a .exe will cause an unsustainable delay (many months) for security reasons (the time it takes to get enough political leverage for a security team to probe the code).

Obviously, I'm obsessed with results and not so much with issues. Everything is open source, so the solution was lying right in front of me: hacking Hadoop.

I made a GitHub repo with a seed for a Spark / Scala program. Basically, I just override 3 files from Hadoop. The modifications themselves are quite minimal: I avoid locating or calling winutils.exe and return a dummy value when needed.

To avoid useless messages in your console log (Hadoop complaining that we don't have winutils.exe), you can disable logging for some Hadoop classes in your log4j.properties (or whatever you are using for log management), as is done in the seed program.

While I might have missed some use cases, I tested the fix with Hive and Thrift and everything worked well. It is based on Hadoop 2.6.5, which is currently used by the Spark 2.4.0 package on mvnrepository.

That's all well and good, but doesn't winutils.exe fulfill an important role, especially as we are touching something inside a package called security? Indeed, by removing winutils.exe we are basically bypassing most of the rights management at the filesystem level. Hadoop's Confluence page says so itself: Hadoop requires native libraries on Windows to work properly, and that includes accessing the file:// filesystem, where Hadoop uses some Windows APIs to implement POSIX-like file access permissions. That wouldn't be a great idea for a big Spark cluster with many users. But in most cases, if you are running Spark on Windows, it's just for an analyst or a small team who share the same rights. As all the input data for Spark is stored in CSV files in my case, there is no point in having higher security in Spark.
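To make the idea of skipping winutils.exe and returning a dummy value a bit more concrete, here is a minimal Java sketch of the pattern. It is not the code from the seed repo and not Hadoop's real classes: the class name `WinutilsFreePermissions`, its methods, and the `rwxrwxrwx` placeholder are all assumptions made up for illustration.

```java
import java.io.IOException;
import java.io.UncheckedIOException;
import java.nio.file.Files;
import java.nio.file.Paths;
import java.nio.file.attribute.PosixFilePermissions;
import java.util.Locale;

// Hypothetical illustration only: this is NOT a Hadoop class.
// It shows the pattern of never calling an external helper on Windows
// and answering with a fixed placeholder instead.
class WinutilsFreePermissions {

    // Assumed placeholder: pretend every file is fully accessible.
    static final String DUMMY_PERMISSION = "rwxrwxrwx";

    // True when the given os.name value denotes a Windows system.
    static boolean isWindows(String osName) {
        return osName != null
                && osName.toLowerCase(Locale.ROOT).startsWith("windows");
    }

    // Returns a POSIX-like permission string for the path.
    // On Windows no executable is located or launched; the dummy is returned.
    static String permissionFor(String path, String osName) {
        if (isWindows(osName)) {
            return DUMMY_PERMISSION;
        }
        try {
            // Elsewhere, ask the JDK for the real POSIX permissions.
            return PosixFilePermissions.toString(
                    Files.getPosixFilePermissions(Paths.get(path)));
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
    }

    public static void main(String[] args) {
        String os = System.getProperty("os.name");
        // Prints the dummy on Windows, the real permissions elsewhere.
        System.out.println(permissionFor(".", os));
    }
}
```

The trade-off is the one discussed in this post: on Windows every caller sees the same wide-open permissions, which is acceptable for a single analyst or a small team sharing the same rights, and not for a shared cluster.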
